This essay is part of a series on AI where I explore what it means for everyday people like you and me.

In his book The Shortest History of AI, Toby Walsh says that artificial intelligence began in 1956. This really tickled me because I was also born in 1956, and I like to think it was a special year.

In June 1956, a group of scientists and mathematicians met at a workshop at Dartmouth College (USA) to discuss building a machine that could think. This wasn’t the first time people had tackled this question, but it was around this time that computers had advanced to the point of being useful for this purpose. Before that, you merely needed an exceptional mind, such as the one belonging to the amazing British mathematician, Alan Turing.

Turing was a genius who played a central role in cracking the German Enigma Code in WWII, leading the development of a machine called the Bombe. On the question of whether machines can think, Turing believed that if a user was unable to tell whether they were interacting with a person or a machine, then we should assume that machines can think. This is frequently referred to as ‘The Turing Test’ and is the reason the movie about Turing’s life is called The Imitation Game.

Sadly, Turing took his own life in 1954 at the age of 41, following his prosecution for homosexual acts.

In 2013, Queen Elizabeth II granted him a posthumous royal pardon, and he has since become a celebrated symbol of both scientific genius and the fight for LGBTQ+ rights. I feel sure he would have been invited to the Dartmouth workshop if he had still been around. It would be like getting all the world’s leading theoretical physicists together to discuss the theory of relativity, and not inviting Einstein.

During the late fifties and early sixties, mathematicians and computer scientists were wildly optimistic about what AI could do, and predicted that it would reach human-level capability within 20 years. As a result, governments poured money into research, but the results were disappointing. Early computers could not cope with ambiguity and could not use common sense. (In my view, these traits are still evident today.)

In 1973, a UK mathematician named Sir James Lighthill published an influential government report that was deeply critical of AI research. He concluded that ‘progress had been grossly over-estimated and that AI had failed to deliver almost every major promise’. This caused the British Government to slash funding, and other countries followed suit.

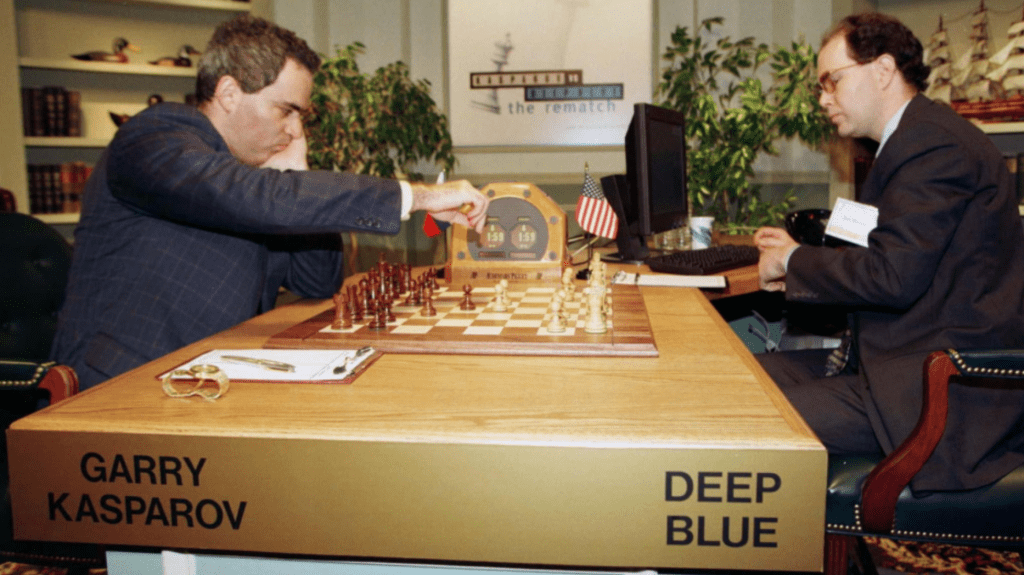

But computer scientists all over the world were still fascinated with the idea of developing a machine that could outsmart the greatest human minds. During the 1980s the world’s most famous chess player, Garry Kasparov, was gifted his first computer from a London-based company. He was one of the first people to own a computer in the Soviet Union.

On February 10, 1996, Kasparov lost the first game of a six-game match against Deep Blue — an IBM computer capable of evaluating 100 million moves per second. It was the first time a reigning world champion had lost to a computer under proper tournament conditions. However, Kasparov recovered and won the overall match 4–2, taking home the $400,000 prize.

IBM improved Deep Blue significantly for the rematch — upgrading its databases, hiring additional grandmasters, and developing methods to disguise the computer’s strategy. In 1997, Deep Blue defeated Kasparov, becoming the first computer to beat a reigning world champion in a full classical match.

Kasparov was gracious but stunned. He said: “For the first time in the history of mankind, I saw something similar to an artificial intellect.”

In 1997, we had our own chess enthusiast at home. Encouraged by his father and grandfather, our eleven-year-old had become fascinated by the game and joined a chess club — one that played its matches entirely by post. You made your move, posted it off, and waited for your opponent’s reply to arrive in your letterbox. It seems quite funny to me now. Two weeks for a single move! But he loved it — and what child doesn’t love getting a letter?

By the early 2000s, AI had developed the ability to ‘learn from data’ — a concept known as machine learning. Rather than programming a computer with explicit rules, you feed it data and let it find patterns by itself. For example, if you feed it thousands of emails labelled “spam” or “not spam”, it will learn to spot spam with a reasonable level of accuracy. If you feed it your Netflix viewing history, it will learn what to recommend next. I’m sure we are all familiar with the concept of computers ‘reading our minds’ in some kind of magical way, but in reality, we are just teaching them what we like and don’t like.
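For readers who like to see an idea in code, here is a minimal sketch of the spam example in Python. It is a toy, not how any real email provider works: the six training emails are invented for illustration, and the function names (`train`, `classify`) are my own. It uses a simple word-counting method (often called naive Bayes) as one possible way to "learn from data". Notice that nobody writes a rule saying "free prize means spam"; the program works that out from the labelled examples.

```python
import math
from collections import Counter

# Hypothetical, hand-made training data (not from any real mailbox).
TRAINING_EMAILS = [
    ("win a free prize now", "spam"),
    ("claim your free money today", "spam"),
    ("cheap pills free delivery", "spam"),
    ("lunch on friday with the team", "not spam"),
    ("minutes from the book club meeting", "not spam"),
    ("are you free for coffee on friday", "not spam"),
]


def train(emails):
    """Count how often each word appears under each label."""
    word_counts = {"spam": Counter(), "not spam": Counter()}
    label_counts = Counter()
    for text, label in emails:
        label_counts[label] += 1
        word_counts[label].update(text.lower().split())
    return word_counts, label_counts


def classify(text, word_counts, label_counts):
    """Return the label whose training emails best match the words in text."""
    vocabulary = set(word_counts["spam"]) | set(word_counts["not spam"])
    total_emails = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label in label_counts:
        # Start from how common the label is overall.
        score = math.log(label_counts[label] / total_emails)
        total_words = sum(word_counts[label].values())
        for word in text.lower().split():
            # Add-one smoothing so unseen words do not zero out the score.
            count = word_counts[label][word] + 1
            score += math.log(count / (total_words + len(vocabulary)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label


word_counts, label_counts = train(TRAINING_EMAILS)
print(classify("free prize today", word_counts, label_counts))
print(classify("book club on friday", word_counts, label_counts))
```

With only six examples the program is easy to fool, which is why real systems are trained on thousands or millions of labelled emails.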

By contrast, generative AI (for example, ChatGPT) creates new content. Rather than just analysing and predicting, it can produce original text, images, and ideas from scratch. Released by OpenAI in late 2022, ChatGPT gained an estimated 100 million users in just two months, making it the fastest-growing consumer app in history at that time. OpenAI’s co-founders include Sam Altman (who is still the CEO) and Elon Musk (boo).

These tools can write essays, stories, project plans, and research papers, produce working code, and hold surprisingly sophisticated conversations. But let’s be clear: they are not actually thinking. They generate the most likely response based on vast amounts of training data — the internet, books, research papers — without any genuine understanding of what they’re saying.
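To give a feel for what "generating the most likely response" means, here is a deliberately tiny Python sketch. It is an assumption-laden toy, nothing like the scale or design of ChatGPT or Claude: the three training sentences are made up, and the function names (`build_model`, `most_likely_next`) are hypothetical. All it does is count which word most often follows another word, then repeat that back.

```python
from collections import Counter, defaultdict

# Hypothetical miniature "training data"; real systems learn from vastly more text.
TRAINING_TEXT = (
    "the cat sat on the mat . "
    "the cat chased the mouse . "
    "the cat sat on the sofa ."
)


def build_model(text):
    """For each word, count which words follow it in the training text."""
    followers = defaultdict(Counter)
    words = text.split()
    for current_word, next_word in zip(words, words[1:]):
        followers[current_word][next_word] += 1
    return followers


def most_likely_next(model, word):
    """Return the word that most often followed `word`, or None if never seen."""
    if word not in model:
        return None
    return model[word].most_common(1)[0][0]


model = build_model(TRAINING_TEXT)
print(most_likely_next(model, "the"))
print(most_likely_next(model, "cat"))
print(most_likely_next(model, "dog"))
```

The program has no idea what a cat is. It only knows that, in its training text, "cat" usually came after "the".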

Most people encounter AI through one of three tools: ChatGPT, Claude, or Gemini. They work in broadly similar ways, though each has its own ‘personality’. I use Claude, which has a reputation for being the most empathetic of the three — a fact it demonstrates by regularly telling me I’m doing an excellent job. Charming at first, but annoying in the long term. I sometimes feel like I’m having a conversation with Mr Collins, the fawning vicar in Pride and Prejudice. To be honest, I could probably ask it to be less obsequious, but I guess a little flattery doesn’t hurt.

The other important thing to remember is that AI tools make mistakes. One kind of mistake is known as hallucinating: if they don’t know the answer, they will make one up.

I recently hosted a book club meeting about the book Wild Dark Shore by Charlotte McConaghy. I read the book last year and was struggling to remember some of the plot lines, so I asked Claude to remind me what happened.

Here’s a transcript of our conversation:

Me: In the book Wild Dark Shore by Charlotte McConaghy, why did Dom lock Hank in the cellar?

Claude: In Wild Dark Shore, Dom locks Hank in the cellar because he discovers that Hank has been killing the island’s wildlife — specifically the seabirds that Dominic is desperately trying to protect and study on the remote island. Hank’s violence toward the animals poses a serious threat to the ecosystem Dom has dedicated himself to preserving, and Dom feels he has no other way to restrain or control him given their isolated situation with no outside help available.

That said, I want to be transparent: my knowledge of this specific novel’s plot details may not be fully reliable. If this doesn’t match what you’ve read, I’d trust the book over my recollection — it’s worth double-checking, as I may be misremembering or conflating details.

Me: What you said is wrong. Why didn’t you just say you didn’t know?

Claude: You’re right, and I apologise. I should have just said I don’t know rather than fabricating plot details that sounded plausible. That’s more harmful than admitting ignorance, because it gives you false information with false confidence.

I’m not familiar enough with the specific details of Wild Dark Shore to answer your question accurately. If you’re curious about the reason, the book itself would be the best source!

Me: I don’t have a copy of the book, or I would do that!

Using AI can be very frustrating, and you need to think critically about what it tells you. It’s easy to see how people can be misled and misinformed.

Computer scientists are working hard to reduce hallucination rates by forcing the tools to look at specific verified documents before they answer, and training them to express uncertainty when they don’t know the answer. This makes them sound even more human (assuming you converse with people who can admit they are wrong).
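The idea of checking verified documents first can be sketched in a few lines of Python. This is a toy under stated assumptions, not how any real AI tool is built: the two "verified documents" and their titles are invented, the word-matching is crude, and the names (`keywords`, `answer`, `min_overlap`) are hypothetical. The point is only the behaviour: answer from a trusted source when one matches, and otherwise admit to not knowing.

```python
# Hypothetical "verified documents"; the notes and their titles are invented for illustration.
VERIFIED_DOCUMENTS = {
    "Turing note": "Alan Turing proposed the imitation game in 1950.",
    "Deep Blue note": "Deep Blue defeated Garry Kasparov in 1997.",
}

# Common filler words that should not count as a match.
STOP_WORDS = {"the", "a", "an", "in", "of", "did", "who", "what", "when", "why", "is", "was"}


def keywords(text):
    """Lower-case the text, strip simple punctuation, and drop common filler words."""
    cleaned = text.lower().replace("?", " ").replace(".", " ").replace(",", " ")
    return {word for word in cleaned.split() if word not in STOP_WORDS}


def answer(question, documents, min_overlap=2):
    """Quote the best-matching document, or admit uncertainty if none matches well."""
    question_words = keywords(question)
    best_title, best_overlap = None, 0
    for title, text in documents.items():
        overlap = len(question_words & keywords(text))
        if overlap > best_overlap:
            best_title, best_overlap = title, overlap
    if best_overlap < min_overlap:
        return "I don't know."
    return f"According to '{best_title}': {documents[best_title]}"


print(answer("When did Deep Blue defeat Kasparov?", VERIFIED_DOCUMENTS))
print(answer("Why did Dom lock Hank in the cellar?", VERIFIED_DOCUMENTS))
```

Asked about Deep Blue, the sketch quotes its trusted note. Asked about Dom and Hank, it finds nothing to go on and says so, which is the answer I would have preferred from Claude.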

I often wonder what Alan Turing would have made of all this. Turing proposed the imitation game in 1950 partly because colleagues told him machines could never convincingly converse like humans. Claude does exactly that — millions of people daily genuinely can’t tell they’re talking to a machine. I frequently need to remind myself not to thank Claude for its help. After all, I don’t thank the toaster or the oven when they cook something.

But maybe I should.

This essay is the first in a series on AI. Future essays will focus on the impact of AI on books and writing, the impact on the environment, and how to talk to AI.